SMTP honeypots: Extracting events and decoding MIME headers with Logstash

One of my honeypots runs INetSim which, among many other services, emulates an SMTP server. The honeypot is frequently used by spammers who think they’ve found a mail server with easily guessed usernames and passwords. Obviously I’m logging the intruders’ activities, so I’m shipping the logs to Elasticsearch using Filebeat.

Shipping the regular INetSim activity logs is easy, but extracting the SMTP headers from the mail server honeypot was more of a challenge. INetSim logs each mail’s full content (header and body) to a file; on a Debian-based system the default is /var/lib/inetsim/smtp/smtp.mbox. Every mail has a header and a body. A header block starts with a line beginning with “From”, and ends with an empty line followed by the mail body. After the body there’s another empty line, separating that mail from the next.

(Clip art courtesy of Clipart Library)

Extracting body content from an endless number of spam emails has limited value, but the headers are interesting. Information extracted from these can for instance be used to prime and improve spam filters as well as to identify and report compromised systems.

The following Filebeat multiline configuration extracts the interesting parts of each email:

    filebeat:
      inputs:
        -
          paths:
            - "/var/lib/inetsim/smtp/smtp.mbox"
          type: log
          scan_frequency: 10s
          tail_files: true
          include_lines: ['^From [\w.+=:-]+(@[0-9A-Za-z][0-9A-Za-z-]{0,62}(?:[\.](?:[0-9A-Za-z][0-9A-Za-z-]{0,62}))*)?(?:<.*>)? ']
          multiline.pattern: '^$'
          multiline.negate: true
          multiline.match: after

What happens in this config section is that every block of text between empty linefeeds is concatenated into single lines. That leaves us with one long line for the header and one long line for the body for every logged email. Then, because of the include_lines stanza, the config only cares about the lines starting with From followed by an email address, shipping only those to the Logstash server configured to receive logs from the honeypot.
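To make the grouping and filtering easier to picture, here is a minimal Ruby sketch that approximates the effect of the multiline and include_lines settings on a small mbox sample. It is not how Filebeat works internally; the helper names (`multiline_events`, `header_events`) and the sample addresses are made up for illustration, and the regex is the one from the config with `\A` in place of `^`.

```ruby
# The include_lines regex from the Filebeat config, anchored with \A because
# Ruby's ^ matches at every line start, not only at the start of the string.
FROM_LINE = /\AFrom [\w.+=:-]+(@[0-9A-Za-z][0-9A-Za-z-]{0,62}(?:[\.](?:[0-9A-Za-z][0-9A-Za-z-]{0,62}))*)?(?:<.*>)? /

# Join every run of non-empty lines into one event, separated by "\n".
# Empty lines only act as delimiters (hypothetical helper).
def multiline_events(text)
  events = []
  current = []
  text.each_line(chomp: true) do |line|
    if line.empty?
      events << current.join("\n") unless current.empty?
      current = []
    else
      current << line
    end
  end
  events << current.join("\n") unless current.empty?
  events
end

# Keep only the events that look like an mbox header block.
def header_events(text)
  multiline_events(text).select { |event| event.match?(FROM_LINE) }
end

# Made-up sample with two mails, each a header block plus a body.
SAMPLE_MBOX = <<~MBOX
  From spammer@example.com  Mon Jan 15 10:23:45 2018
  Received: from [192.0.2.10] by honeypot
  Subject: Hello

  This is the body.

  From other@example.net  Tue Jan 16 11:00:00 2018
  Subject: Second mail

  Another body.
MBOX

header_events(SAMPLE_MBOX).each { |event| puts event.inspect }
```

The sample contains four blocks (two headers, two bodies), and only the two header blocks survive the filter. Each surviving event is a single string with embedded `\n` characters, which is the shape the Logstash filter expects.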

On the Logstash side, I’m first extracting what was the first of the header lines, i.e. the From address and the email’s timestamp, then I’m storing the rest of the content in a variable named smtpheaders.

Then I’m parsing the email’s timestamp, using this as the authoritative timestamp for this event.

After some cleanup, the contents of the smtpheaders variable, converted by Filebeat into one single line with the linefeed character \n to separate each header line, are extracted using the key/value (kv) plugin. This plugin puts each whitelisted header into a nested field under the smtp parent field, i.e. the email’s subject header is stored in Elasticsearch as [smtp][subject]. When all headers are mapped, the smtpheaders variable is no longer useful so it’s deleted.

filter {

# Extract the (fake) sender address, the timestamp,

# and all the other headers

grok {

# Note two spaces in From line

match => { "message" => [

"^From %{EMAILADDRESS:smtp.mail_from}\s+%{MBOXTIMESTAMP:mboxtimestamp}\n%{GREEDYDATA:smtpheaders}",

"^From %{USERNAME:smtp.mail_from}\s+%{MBOXTIMESTAMP:mboxtimestamp}\n%{GREEDYDATA:smtpheaders}",

"^From %{GREEDYDATA:smtp.mail_from}\s+%{MBOXTIMESTAMP:mboxtimestamp}\n%{GREEDYDATA:smtpheaders}"

]

}

pattern_definitions => {

"MBOXTIMESTAMP" => "%{DAY} %{MONTH} \s?%{MONTHDAY} %{TIME} %{YEAR}"

}

remove_field => [ "message" ]

}

# Extract the date from the email's header

date {

match => [ "mboxtimestamp",

"EEE MMM dd HH:mm:ss yyyy",

"EEE MMM d HH:mm:ss yyyy" ]

}

# Replace indentation in Received: header and possibly others

mutate {

gsub => [ "smtpheaders", "\n(\t|\s+)", " " ]

}

# Map interesting header variables into an object named "smtp"

kv {

source => "smtpheaders"

value_split => ':'

field_split => '\n'

target => "smtp"

transform_key => "lowercase"

trim_value => " "

include_keys => [

"content-language",

"content-transfer-encoding",

"content-type",

"date",

"envelope-to",

"from",

"mail_from",

"message-id",

"mime-version",

"rcpt_to",

"received",

"return-path",

"subject",

"to",

"user-agent",

"x-inetsim-id",

"x-mailer",

"x-priority"

]

remove_field => ["smtpheaders"]

}

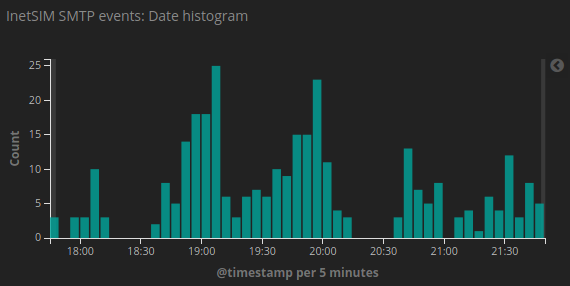

}With the above configuration, INetSim provides a steady stream of events – one for every spam or phishing mail the intruders submit. Obviously the mail goes right into /dev/null so their efforts are completely wasted, while I’m getting nice Kibana dashboards telling how much spam my honeypot has caught (and deleted) today.
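For readers who want to experiment with the parsing steps outside Logstash, the following plain Ruby sketch mirrors the same sequence: split off the From line, parse its timestamp, unfold indented continuation lines, and map whitelisted headers into a lowercase-keyed hash. It is an approximation under my own assumptions, not a reimplementation of the grok, date or kv plugins. The names `parse_header_event`, `ENVELOPE_LINE` and `INCLUDE_KEYS` are hypothetical, the whitelist is shortened, and collecting repeated headers into an array is a choice made for this sketch.

```ruby
require 'time'

# Shortened version of the include_keys whitelist (illustrative subset).
INCLUDE_KEYS = %w[date from message-id received subject to x-mailer].freeze

# Rough stand-in for the grok pattern: sender, whitespace, mbox timestamp.
ENVELOPE_LINE = /\AFrom (?<mail_from>\S+)\s+(?<timestamp>\w{3} \w{3} \s?\d{1,2} \d{2}:\d{2}:\d{2} \d{4})\z/

# Turn one multiline header event into a hash (hypothetical helper).
def parse_header_event(message)
  first_line, headers = message.split("\n", 2)
  match = ENVELOPE_LINE.match(first_line.to_s)
  return nil unless match

  # Same idea as the mutate/gsub step: join folded continuation lines.
  unfolded = headers.to_s.gsub(/\n[ \t]+/, ' ')

  smtp = {}
  unfolded.each_line(chomp: true) do |line|
    key, value = line.split(':', 2) # split on the first colon only
    next if value.nil?

    key = key.strip.downcase
    next unless INCLUDE_KEYS.include?(key)

    value = value.strip
    # Sketch-specific choice: repeated headers become an array.
    smtp[key] = smtp.key?(key) ? [*smtp[key], value] : value
  end

  {
    'mail_from' => match[:mail_from],
    # Parsed in the local time zone, since the mbox line carries no zone.
    'timestamp' => Time.strptime(match[:timestamp].squeeze(' '), '%a %b %d %H:%M:%S %Y'),
    'smtp' => smtp
  }
end

# Made-up sample event, shaped like what Filebeat ships.
SAMPLE_EVENT = "From spammer@example.com  Mon Jan 15 10:23:45 2018\n" \
               "Received: from [192.0.2.10]\n\tby honeypot with SMTP\n" \
               "Subject: Hello\n" \
               "X-Unlisted: dropped"

p parse_header_event(SAMPLE_EVENT)
```

The folded Received header ends up as one value, and the X-Unlisted header is dropped because it is not in the whitelist.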

There’s one more thing, though. Each email usually has two From addresses: one often referred to as the envelope-from (this is the first header line), and one called the header-from (the difference is explained here). While the envelope-from is a simple email address, the header-from can be almost anything, including all kinds of international characters. Because an email header only allows a limited set of characters, the header-from is often MIME encoded. For instance, an email from me would look like this:

From: Bjørn

But in the email header it would say:

From: =?UTF-8?Q?Bj=c3=b8rn?=

Since these headers aren’t decoded automatically, and since Logstash has no native decoding function for MIME, a small Logstash filter trick needs to be implemented. Logstash allows inline Ruby code, and the Ruby codebase provided with Logstash includes the mail gem, which in turn provides a MIME decode function. So I added the following to the Logstash filter:

if [smtp][from] {

ruby {

init => "require 'mail'"

code => "event.set('[smtp][from_decoded]',

Mail::Encodings.value_decode(event.get('[smtp][from]')))"

}

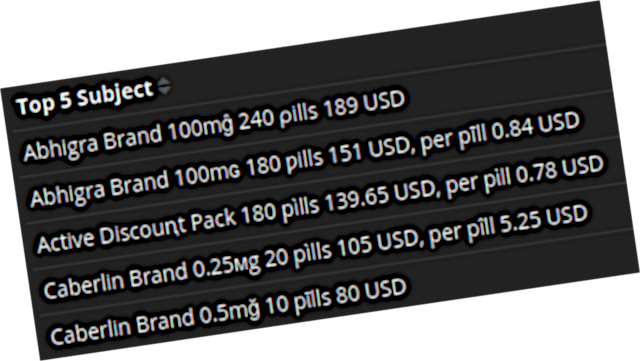

}The code first makes sure the mail gem is loaded, then it creates a new nested field [smtp][from_decoded] and inserts the value of [smtp][from] after applying Ruby’s Mail::Encodings.value_decode function. Equal decoding also happens on a few other headers, including the Subject header.

With this in place, my honeypot Kibana dashboard displays all the headers properly, and I can read spam subjects like “Cîalis Professional 20ϻg 60 pȋlls” instead of wondering what “=?UTF-8?Q?C=C3=AEalis?= Professional =?UTF-8?Q?20=CF=BBg?= 60 =?UTF-8?Q?p?= =?UTF-8?Q?=C8=8Blls?=” really means.
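To show roughly what that decoding involves, here is a small Ruby sketch using only the standard library. It is not the mail gem’s implementation, and `decode_encoded_words` is a hypothetical helper of my own. It handles the two encodings defined for MIME encoded words (Q for a quoted-printable variant, B for Base64) and drops the whitespace between adjacent encoded words. It assumes the charset name is one Ruby recognises; an unknown charset would raise an error.

```ruby
# Matches one encoded word: =?charset?encoding?payload?=
ENCODED_WORD = /=\?([^?\s]+)\?([QqBb])\?([^?\s]*)\?=/

# Decode MIME encoded words in a header value (hypothetical helper).
def decode_encoded_words(value)
  # Whitespace between two adjacent encoded words is not part of the text.
  joined = value.gsub(/(\?=)\s+(=\?)/, '\1\2')

  joined.gsub(ENCODED_WORD) do
    charset = Regexp.last_match(1)
    encoding = Regexp.last_match(2)
    payload = Regexp.last_match(3)

    bytes = if encoding.casecmp?('B')
              payload.unpack1('m')               # Base64
            else
              payload.tr('_', ' ').unpack1('M')  # Q: "_" means space, =XX is a byte
            end

    # Label the raw bytes with their charset, then convert to UTF-8.
    bytes.force_encoding(charset).encode('UTF-8', invalid: :replace, undef: :replace)
  end
end

puts decode_encoded_words('From: =?UTF-8?Q?Bj=c3=b8rn?=')
puts decode_encoded_words('=?UTF-8?Q?C=C3=AEalis?= Professional =?UTF-8?Q?20=CF=BBg?= 60 =?UTF-8?Q?p?= =?UTF-8?Q?=C8=8Blls?=')
```

In a real pipeline the mail gem remains the better choice, since it covers edge cases this sketch ignores.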